Three Modes of Cognition

Intelligence is not elemental. Neither is artificial intelligence. Both are complex compounds composed of more primitive cognitive elements, some of which we are only now discovering. We don’t yet have a periodic table of cognition (see my post The Periodic Table of Cognition), so we have not finished identifying what the fundamental elements of intelligence are.

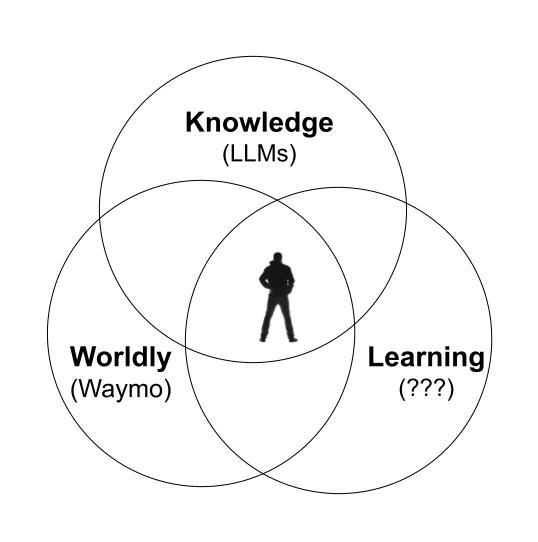

In the interim I propose three general classes of cognition that together can make something like a human intelligence. The three modes are: 1) Knowledge reasoning, 2) World sense, and 3) Continuous memory and learning.

Knowledge Reasoning is the kind of cognition generated by LLMs. It is a type of super-smartness that comes from reading (and remembering) every book ever written, and ingesting every written message posted. This knowledge-based intelligence is incredibly useful in answering questions, doing research, figuring out intellectual problems, accomplishing digital tasks, and perhaps even coming up with novel ideas. One LLM can deliver a whole country of PhD experts. Already in 2026 this book-smartness greatly exceeds the capabilities of humans.

World Sense is a kind of intelligence trained on the real world, instead of being trained on text descriptions of the real world. Systems of this kind are sometimes called world models, or Spatial Intelligence, because this cognition is based on (and trained on) how physical objects behave in the 3-dimensional world of space and time, and not just the immaterial world of words talking about the world. This species of cognition knows how things bounce, or flow, or how proteins fold, or molecules vibrate, or light bends. It incorporates a recognition of gravity, an awareness of continuity, a sense of matter’s physicality, an intimate knowledge of how mass and energy are conserved. This is the cognition that drives Waymo cars better than humans drive. We don’t yet have a flood of robots in 2026 because this kind of cognition relies upon more than LLMs. It requires layers of other cognitive elements working along with neural nets, such as vision algorithms and world models like Genie 3, which was trained on hundreds of thousands, perhaps millions, of YouTube videos. The videos of real life teach the lessons of operating in the real world. Tesla’s self-driving intelligence was trained on billions of hours of driving video grabbed from its human-driven cars, which taught it how cars and pedestrians and environments behave in the real world. Central to this type of physical smartness is common sense, the kind that a human child of 5 years would have, but that most AIs to date lack: for instance, the awareness that objects don’t vanish just because you can’t see them. For robots to take over many of our more tedious tasks, this kind of world sense and spatial intelligence will be needed.

Continuous Learning is essential to the compound of human intelligence, but absent right now in artificial intelligence. Some even define AGI as continuous learning intelligence. When we are awake, we are constantly learning, trying to recover from mistakes (don’t do that again!) and to figure out new ways based on what we already know. A major reason why AI agents have not replaced human workers in 2026 is that the former never learn from their mistakes while the latter, even if not as smart, can learn on the job, and can get better each day. Despite our expectations, current LLMs do not learn from each other, nor do they learn when you correct them again and again. They currently have no robust way to remember their mistakes or corrections, nor any way to get smarter more often than about once a year, when they are retrained from 4.0 to 5.0. Every time you correct ChatGPT’s mistake, it forgets by the next conversation. Every time a robot fails at a task, it will fail the exact same way tomorrow. This is why AIs can’t hold a real job in 2026. At this moment we lack the software genius to install continuous learning (at scale) in the machines. This quest is a major area of research; it is unknown whether the current neural net models will be capable of evolving this ability, or whether new model architectures are needed. Continuous learning requires a continuous persistent memory, which is computationally taxing, among other problems. When AI experiences another sudden quantum jump in capabilities, it will likely be when someone cracks the solution for a continuous learning function. Human employees are unlikely to lose their jobs to AIs that cannot continuously learn, because a lot of the work we need done requires continuous learning on the job.

There may be other elemental particles of cognition in the mixture of our human intelligence, but I am confident it includes these three as primary components. For manufacturing artificial intelligence we have an ample supply of Knowledge IQ, and we have some preliminary amounts of World IQ, but we seriously lack Learning IQ at scale.

It is important to acknowledge that for many jobs we do not need all three modes. To drive our cars, we chiefly need world sense. To answer questions, smart LLM book knowledge is most of what we need. There may be use cases for an AI that only learns but does not have a world sense or even that much knowledge. And of course, there will be many hybrid versions that combine two modes, or only a bit of two or three.

In brief, while current (February 2026) LLMs greatly exceed humans in their knowledge-based reasoning, they lack two other significant cognitive skills that they need before they can actually replace humans: they don’t have a flawless grasp of the real world (thus no robots), and they don’t learn. I expect the mainstream adoption of AI in the next 2 years will depend hugely on how much of the other two modes of cognition can be implemented in AIs.